Tessa Little | Originally Published: 24 January 2026

When Prime Minister Justin Trudeau announced his resignation and prorogued Parliament on January 6th, 2025, the Digital Charter Implementation Act (Bill C-27) took its final breath. Bill C-27 contained the Artificial Intelligence and Data Act (AIDA), which aimed to ensure that the artificial intelligence (AI) systems used in Canada are safe and non-discriminatory. If passed, the bill would have created Canada’s first AI regulatory framework, but it died on the order paper with prorogation. Now, a year later, Canada still has no comprehensive AI legislation. To determine whether Canada should revive AIDA or move in a different direction, we can look to the European Union (EU), which has implemented a similar policy for governing artificial intelligence: the EU AI Act.

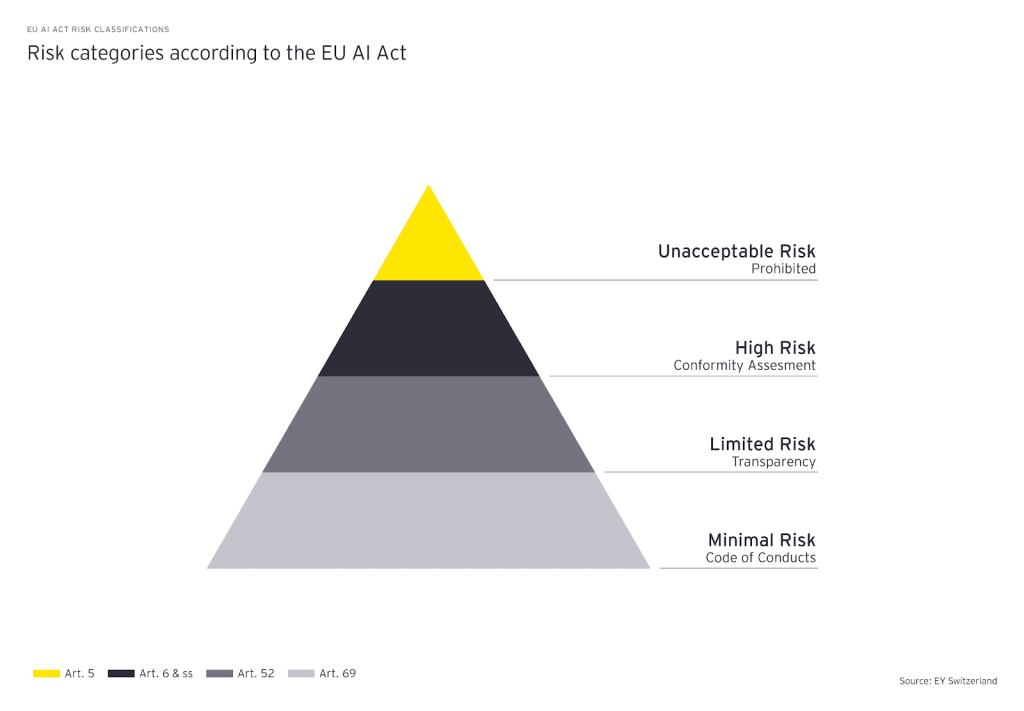

The EU AI Act is the world’s first comprehensive framework for regulating artificial intelligence. It entered into force in August 2024, with its provisions taking effect in stages over the following three years. The act regulates AI systems according to their degree of potential “risk,” or how likely they are to cause harm. There are four categories of risk that an AI system can fall into under the act: unacceptable risk, high risk, limited risk, and minimal risk. Unacceptable-risk applications include the use of AI for social scoring, real-time biometric identification in public spaces, or intentionally manipulating users. AI uses deemed “unacceptable” are prohibited altogether. The act also imposes restrictions on “high-risk” uses of AI, which pose significant threats to the health, safety, or fundamental rights of persons. An example of a high-risk system is screening software used by employers: if the software has significant biases, it could lead to widespread discrimination in the labour market. Under the act, these systems must undergo rigorous assessment before distribution to ensure accuracy, security, proper data governance, and transparency. All of these requirements aim to ensure that the system does not undermine individuals’ rights or safety.

The majority of AI systems are classified as “limited” or “minimal” risk. For these categories, the act mostly imposes transparency obligations on distributors. Limited-risk AI systems must inform users that they are interacting with an AI system, allowing them to make informed choices. This category includes AI applications that can generate or manipulate images, sound, or video, such as deepfakes. The minimal-risk category includes AI systems used in video games or spam filters. These minimal-risk systems are not regulated under the act, and EU Member States cannot impose additional domestic regulations on them. The following infographic shows the different levels of risk and their associated regulations:

Source: EY Switzerland

This risk-based approach was developed to regulate AI according to its societal impact, ensuring that technology complies with the rules before it is distributed. Additionally, because the act sets the world’s first comprehensive AI rules, non-European firms must adapt if they want access to the EU market, which potentially exports the EU’s AI values globally.

However, this model also has drawbacks. Chiefly, it raises the cost of developing AI because new evaluations are now required throughout the development process. Larger firms can absorb these regulatory costs, but smaller firms are discouraged from entering the market. Ultimately, the policy raises barriers to entry, which decreases competition and weakens the pressure to innovate, especially for higher-risk applications. The act may therefore impede AI development by making the market less competitive.

Canada’s proposed AIDA would have followed a framework comparable to the EU AI Act, as it was intended to regulate “high-impact” uses of AI. AIDA would have set out how high-impact uses are determined, namely by their potential to cause harm or produce biased output. For example, systems that moderate content on social media platforms have a high potential for bias, so they would be deemed high-impact and regulated more heavily under AIDA. According to the AIDA companion document, after a high-impact use of AI is identified, there would be obligations to “identify, assess, and mitigate risks of harm or biased output prior to a high-impact system being made available for use.” To meet these obligations, systems would have to be subject to human oversight and be transparent, fair, safe, accountable, valid, and robust. This way, there is a procedure at each stage of AI development to ensure that the final product is non-discriminatory and safe. The framework is thus very similar to the EU’s: both require assessment throughout development and focus regulation on higher-risk systems.

Since the two acts are so similar, the Canadian one would likely have benefits and drawbacks similar to those of the EU AI Act. On one hand, it has the same risk-centered focus, with systems evaluated on their societal impact, which encourages more ethical AI development. On the other hand, it carries the same consequences of reduced competition. Canada could improve on this by rewriting AIDA so that the government provides more support, mainly through subsidies, to help smaller companies stay in the market and maintain competition.

Overall, the EU AI Act shows that it is possible to create comprehensive AI regulation focused on AI’s risks and benefits to society. The now-defunct AIDA followed this framework by requiring that AI systems be screened before entering the market. With the right reforms, Canada has an opportunity to become a global leader in AI by writing new legislation that fosters responsible and competitive AI development.

References

1. Balcioğlu, Yavuz Selim, et al. “A Turning Point in AI: Europe’s Human-Centric Approach to Technology Regulation.” Journal of Responsible Technology, vol. 23, Sept. 2025, p. 100128, http://www.sciencedirect.com/science/article/pii/S2666659625000241, https://doi.org/10.1016/j.jrt.2025.100128.

2. Bentata, Pierre, and Nicolas Bouzou. “The Adoption of AI and the Threat to Innovation: Lessons from Europe | Montreal Economic Institute.” Iedm.org, 8 Jan. 2026, www.iedm.org/the-adoption-of-ai-and-the-threat-to-innovation-lessons-from-europe/.

3. European Parliament. “EU AI Act: First Regulation on Artificial Intelligence.” European Parliament, 8 June 2023, www.europarl.europa.eu/topics/en/article/20230601STO93804/eu-ai-act-first-regulation-on-artificial-intelligence.

4. Ferguson, Christopher, and Dongwoo Kim. “Prorogation’s Digital Impact: Canada’s Digital Bills Set to Die on the Order Paper.” Fasken.com, 14 Jan. 2025, www.fasken.com/en/knowledge/2025/01/prorogations-digital-impact.

5. Government of Canada. “The Artificial Intelligence and Data Act (AIDA) – Companion Document.” Ised-Isde.canada.ca, Government of Canada, 2023, ised-isde.canada.ca/site/innovation-better-canada/en/artificial-intelligence-and-data-act-aida-companion-document.

6. Meier, Konrad, and Roger Spichiger. “The EU AI Act: What It Means for Your Business.” EY, 15 Mar. 2024, www.ey.com/en_ch/insights/forensic-integrity-services/the-eu-ai-act-what-it-means-for-your-business.

7. Siegmann, Charlotte, and Markus Anderljung. The Brussels Effect and Artificial Intelligence. Centre for the Governance of AI, Aug. 2022, cdn.governance.ai/Brussels_Effect_GovAI.pdf.